In October, I gave a presentation at BarCamp Philadelphia titled Building Better API Documentation. This post is based on that presentation.

Despite my English major background, I’m a big nerd. I program as a hobbyist and I program for work. But specifically, it’s my work, documenting a web hosting service, that puts me in contact with a lot of different Application Programming Interfaces (APIs, how different pieces of software “talk” to each other). And having seen such a variety of APIs, I’ve come to this sad conclusion: most APIs have documentation that is useless unless you’re already familiar with that API.

This applies across all kinds of software: open source, proprietary, internal, external, web APIs, library APIs, and more. It’s a widespread and sorry state of affairs, especially because good documentation is what makes me, as a user of APIs, happy to be a user and a customer. That means a lot of APIs are making me unhappy. So I want to help developers and writers be happier by building better API documentation.

Now, it’s probably bad for my profession to put this idea into your mind, but doing the right thing isn’t hard. It’s just that doing the wrong thing—creating bad documentation—is so much easier. I can look at API docs and find all manner of mistakes in minutes, just because it is that easy. I make some of these mistakes myself. But there are a few things that can be done to avoid the biggest, most glaring errors.

First, write your own documentation. Or to put it another way, if you didn’t write it, it’s not documentation. Or if you didn’t organize it, it’s not documentation. There are tools out there which claim to generate documentation, bypassing the process of writing and organizing.

Java has Javadoc. Ruby has RDoc. Python has epydoc. They all do the same kinds of things, like spitting out class definitions, function signatures, and inheritance diagrams. That’s what people usually mean when they talk about generating documentation. I’ve come to regard that idea with suspicion, because I’ve never encountered computer-generated documentation that was half as effective at communicating with me as even the poorest human-written documentation. The output from such tools is usually organized to mirror the source code input. Generated docs are structured around execution, not consumption.

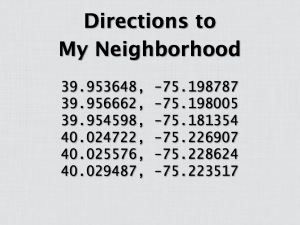

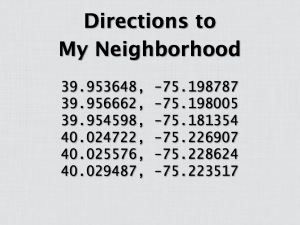

Consider this metaphor: what if you wanted to know how to get from Jon Huntsman Hall (where I originally gave this talk) to my neighborhood, Manayunk? One way to get you from point A to point B would be to dump a bunch of latitude and longitude coordinates on you. That’s technically enough information to make that journey, but it misses the point. Latitude and longitude are information about navigation, but they’re not how to actually navigate. They’re the generated documentation of navigation.

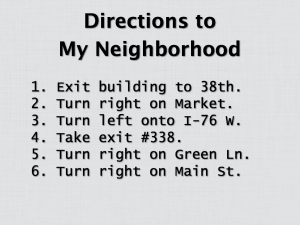

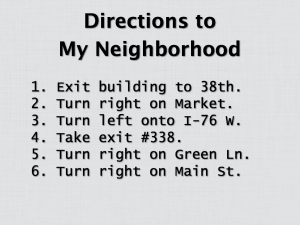

A better way to help you get from point A to point B is to incrementally build understanding and provide details which are useful to humans, not a GPS device. Better directions for people would include details structured around what the audience wants to do with the information. In this case, more wayfinding details, like the starting point (e.g., “building”), names of things along the route (e.g., “Market”), and relative directions (“turn right”). This is the kind of thing that works with real people.

What’s better still is that it works for a much larger set of audiences. While the latitude and longitude data is only good for those with GPS devices or detailed maps handy, the human-centric directions work for many people. It doesn’t matter if you know the area like the back of your hand or if you’re a visitor to Philadelphia. No one’s left out and no one’s insulted.

A lot of writers, especially in technical domains, have a fear of dumbing down their content. They don’t want to insult their audience with hand-holding. But what many of these writers forget is the incredible human propensity for filtering. People readily distill the information they need from the information available, but face a much harder challenge in inventing it themselves.

All that said, documentation generation isn’t useless. Some of these tools can actually be repurposed to produce beautiful, smart documentation. But by default, documentation generators are pretty source code viewers. They provide an alternative means for inspecting the source code, but they’re no substitute for human-written documentation.
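To make the "pretty source code viewer" point concrete, here is a minimal sketch of what a bare-bones generator does, using only Python's standard `inspect` module. The `PollStore` class and the `generate_reference` helper are hypothetical names invented for this illustration; real tools such as Javadoc or epydoc are far more elaborate, but the shape of the output is similar: signatures and summaries, listed in whatever order the source happens to define them.

```python
import inspect


class PollStore:
    """Hypothetical in-memory poll storage, used only for illustration."""

    def __init__(self):
        self._polls = {}

    def create(self, question, choices):
        """Create a poll and return its id."""
        poll_id = len(self._polls) + 1
        self._polls[poll_id] = {
            "question": question,
            "votes": dict.fromkeys(choices, 0),
        }
        return poll_id

    def vote(self, poll_id, choice):
        """Record one vote for a choice."""
        self._polls[poll_id]["votes"][choice] += 1

    def results(self, poll_id):
        """Return a mapping of choice to vote count."""
        return dict(self._polls[poll_id]["votes"])


def generate_reference(cls):
    """Return one line per public method: name, signature, docstring summary."""
    lines = []
    # vars(cls) preserves definition order, so the output mirrors the source.
    for name, member in vars(cls).items():
        if name.startswith("_") or not inspect.isfunction(member):
            continue
        doc = inspect.getdoc(member) or ""
        summary = doc.split("\n", 1)[0]
        lines.append(f"{cls.__name__}.{name}{inspect.signature(member)}: {summary}")
    return lines


if __name__ == "__main__":
    print("\n".join(generate_reference(PollStore)))
```

Everything in that listing is accurate, and none of it tells a newcomer which method to call first or why. That ordering and context is the part a person has to write.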

So if documentation generators won’t do the work for you, what do you need to write? In this order, there are three things you need to write:

- Tasks

- Topics

- References

Tasks are critical. “How do I…?” is likely to be the first and last question your audience ever asks of your documentation. I once came across a piece of software that claimed, on its feature list, to solve the problem I had exactly. But I ultimately did not use that software because I could not figure out how I was supposed to install the thing (and I’m no technical slouch). If your docs can’t answer these kinds of simple, fundamental questions, that’s a failure no matter how good the rest of the materials are.

A good example of task-based documentation is the Django tutorial. The Django tutorial shows you how to make a website which lets you create polls, cast votes, and see results. The entire tutorial takes perhaps 20 or 30 minutes for someone with previous Python experience. It answers the fundamental question anyone has when first presented with the Django library: how am I supposed to use this thing?

But tasks alone aren’t enough. Topic-based documentation affords the opportunity to answer questions like “What is this?” and “Why is this?” Topic docs let you create a toolkit for your audience to complete tasks which your docs don’t or can’t address specifically. Want to explain your serialization format? Talk philosophy? Describe your ticket triage procedure? Do it in the topic-based documentation.

An excellent example of topic documentation is SQLite’s page Appropriate Uses for SQLite. The page describes the project’s design goals and provides example use-cases for SQLite. It’s good documentation, but it might be the single best page of documentation created by an open source project because of the following section, “Situations Where Another RDBMS May Work Better.” It’s a list of use-cases where SQLite should not be used. It’s a brilliant application of topic documentation. No audience member is going to ask “When should I reject your software?” but the topic docs still allow an opportunity to address the issue.

The final type to round out API documentation is reference material. References are for people who know what they’re looking for. References are for the finer details. References are the one and only place where a documentation generator makes sense, but even then you can often do better.

Flask, a micro web framework for Python, has a beautiful, hand-crafted API reference. It’s built with a wonderful tool called Sphinx, which pulls in relevant data from the source code (like class names and function signatures), but obligates the writer to organize the information. The result is documentation that’s organized for human consumption, but coupled to the source for accuracy.
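The division of labor described here can be sketched in a few lines of Python. This is not how Sphinx works internally; it is only an illustration of the idea, using the standard `inspect` module. The three poll functions and the `OUTLINE` and `render` names are hypothetical. The writer chooses the order and writes the prose, while signatures and docstrings are pulled from the code so they cannot drift out of date.

```python
import inspect


# Hypothetical API functions, deliberately defined in an unhelpful order.
def get_results(poll):
    """Return a mapping of choice to vote count."""
    return dict(poll)


def cast_vote(poll, choice):
    """Add one vote for the given choice."""
    poll[choice] = poll.get(choice, 0) + 1


def create_poll(choices):
    """Create a poll with zero votes for each choice."""
    return dict.fromkeys(choices, 0)


# The writer, not the tool, decides the reading order and supplies the prose.
OUTLINE = [
    ("Start here", "Every workflow begins by creating a poll.", create_poll),
    ("Recording votes", "Call this once per ballot.", cast_vote),
    ("Reading the tally", "Safe to call at any time.", get_results),
]


def render(outline):
    """Combine hand-written headings and prose with details read from the code."""
    parts = []
    for heading, prose, func in outline:
        # The signature and docstring come from the source, so they stay accurate.
        signature = f"{func.__name__}{inspect.signature(func)}"
        parts.append(f"{heading}\n{signature}\n{prose} {inspect.getdoc(func)}")
    return "\n\n".join(parts)


if __name__ == "__main__":
    print(render(OUTLINE))
```

If a function's parameters change, the rendered signature changes with it, but the structure of the page remains a decision the writer made for the reader.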

Overall, I think good API documentation is within reach for most writers. Fancy tools, like documentation generators, aren’t required. A simple text editor will do. Take a look at Flot’s documentation. It’s a plain text file, which covers tasks, topics, and references with ease. You just have to write.

So start somewhere simple, like how to install your software or how to register for an API key or whatever simple prerequisite you’ve got. You can start today to make your users happier and bring about better living through documentation.

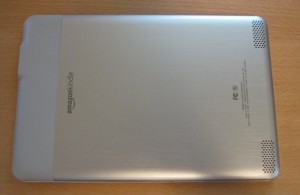

This step is a little scary while you do it. You will think you’re breaking the Kindle, but as long as you apply only gentle force, you won’t actually break anything.

Until you replace the back panels, keep a close eye on the volume rocker. Without the back panels to hold it into position, it may fall out.